Traditional data centers only stored, retrieved and processed data. In the generative and agentic AI era, these facilities have evolved into AI token factories. With AI inference becoming their primary workload, their primary output is intelligence manufactured in the form of tokens.

This transformation demands a corresponding shift in how the economics of AI infrastructure, including total cost of ownership (TCO), is assessed. Enterprises evaluating AI infrastructure still too often focus on peak chip specifications, compute cost or floating point operations per second for every dollar spent, aka FLOPS per dollar.

The distinction that matters is this:

- Compute cost is what enterprises pay for AI infrastructure, whether rented from cloud providers or owned on premises.

- FLOPS per dollar is how much raw computing power an enterprise gets for every dollar spent, but raw compute and real-world token output are not the same thing.

- Cost per token is an enterprise’s all-in cost to produce each delivered token, usually represented as cost per million tokens.

The first two are merely input metrics. Optimizing for inputs while the business runs on output is a fundamental mismatch.

Cost per token determines whether enterprises can profitably scale AI. It’s the one TCO metric that directly accounts for hardware performance, software optimization, ecosystem support and real-world utilization — and NVIDIA delivers the lowest cost per token in the industry.

What Are the Factors That Lower Token Cost?

Understanding how to optimize token cost requires looking at the equation for calculating cost per million tokens.

In this equation, many enterprises evaluating AI infrastructure focus on the numerator: the cost per GPU per hour. For cloud deployments, this is the hourly rate paid to a cloud provider; for on-premises deployments, it’s the effective hourly cost derived from amortizing owned infrastructure. The real key to reducing token cost, however, lies in the denominator: maximizing the delivered token output.

That denominator carries two business implications.

- Minimize token cost: When this increase in token output is reflected through the cost equation, it drives down cost per token, which is what grows the profit margin on every interaction served.

- Maximize revenue: More tokens delivered per second also translates to more tokens per megawatt, which means more intelligence to use in AI-powered products and services, generating more revenue from the same infrastructure investment.

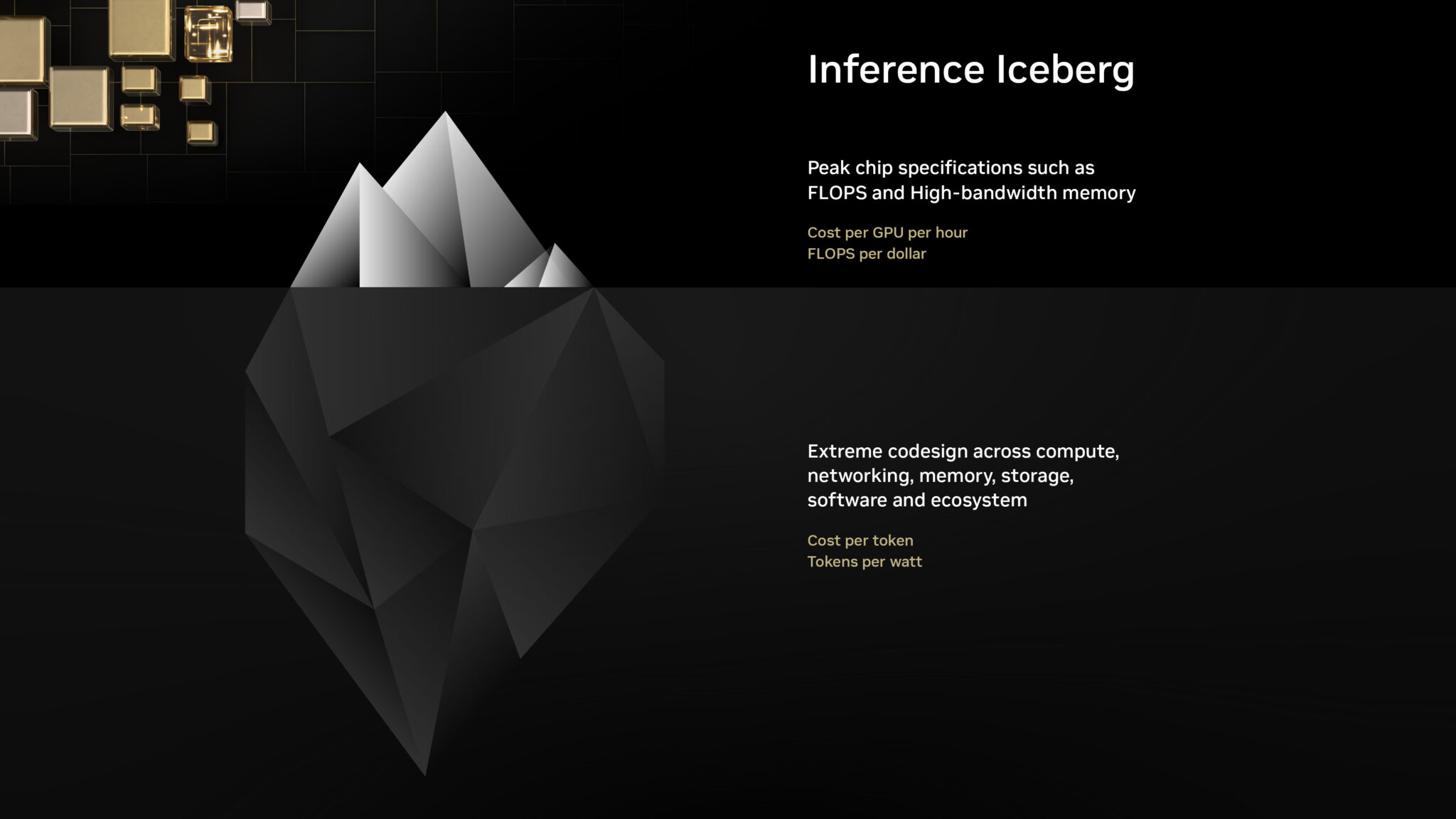

So focusing only on the numerator means missing what drives the denominator. Think of it as an “inference iceberg”: The numerator sits above the surface, visible and easy to compare. The denominator is everything beneath the surface, which represents key factors that determine real-world token output. Accurately evaluating AI infrastructure starts with asking what lies beneath.

- Surface-level inquiry:

- What is the cost per GPU hour?

- What are the peak petaflops and high-bandwidth memory capacity?

- What are the FLOPS per dollar?

- In-depth cost analysis:

- What is the cost per million tokens? Specifically, what is the cost per million tokens for large-scale mixture-of-experts (MoE) reasoning models, which represent the most widely deployed type of AI models?

- What is the delivered token output per megawatt? For on-premises deployments especially, where capital commitment to land, power and infrastructure is substantial, maximizing intelligence produced per megawatt is critical.

- Can the scale-up interconnect handle the “all-to-all” traffic of MoE models?

- Is FP4 precision supported? Can the inference stack make use of FP4 while maintaining high accuracy?

- Does the inference runtime support speculative decoding or multi-token prediction to increase user interactivity?

- Does the serving layer support disaggregated serving, KV-aware routing, KV-cache offloading and other optimizations?

- Does the platform support the unique workload requirements of agentic AI — including ultralow latency, high throughput and large input sequence lengths?

- Does the platform support the full lifecycle, from training and post-training to high-scale inference, across all model architectures, to ensure infrastructure fungibility and high utilization?

Every one of these algorithmic, hardware and software optimizations must be active and integrated, or the denominator collapses. A “cheaper” GPU that delivers significantly fewer tokens per second results in a much higher cost per token. AI infrastructure that gets it right across the full stack ensures that every optimization enhances the others.

Why Does Cost per Token Matter Much More Than FLOPS per Dollar?

The following data for the DeepSeek-R1 AI model demonstrates the difference between theoretical and actual business outcomes.

Looking at compute cost alone, the NVIDIA Blackwell platform appears to cost roughly 2x more than NVIDIA Hopper — but compute cost says nothing about the output that investment buys. An analysis of mere FLOPS per dollar suggests a 2x NVIDIA Blackwell advantage compared with the NVIDIA Hopper architecture. However, the actual outcome is orders of magnitude different: Blackwell delivers more than 50x greater token output per watt than Hopper, resulting in nearly 35x lower cost per million tokens.

| Metric | NVIDIA Hopper (HGX H200) | NVIDIA Blackwell (GB300 NVL72) | NVIDIA Blackwell Relative to Hopper |

|---|---|---|---|

| Cost per GPU per Hour ($) | $1.41 | $2.65 | 2x |

| FLOP per Dollar (PFLOPS) | 2.8 | 5.6 | 2x |

| Token Output per GPU | 90 | 6K | 65x |

| Token Output per MW | 54K | 2.8M | 50x |

| Cost per Million Tokens ($) | $4.20 | $0.12 | 35x lower |

Note: Data is sourced from NVIDIA analysis and the SemiAnalysis InferenceX v2 benchmark.

This massive divergence proves NVIDIA Blackwell delivers a massive leap in business value over the earlier Hopper generation that far outpaces any increase in system cost.

How to Choose the Right AI Infrastructure

Comparing AI infrastructure based on compute cost or theoretical FLOPS per dollar isn’t just insufficient; it doesn’t provide an accurate representation of inference economics. As the data demonstrates, an accurate evaluation of AI infrastructure’s revenue potential and profitability requires a shift from input metrics to cost per token and delivered token output.

NVIDIA delivers the industry’s lowest token cost and highest token throughput through extreme codesign across compute, networking, memory, storage, software and partner technologies. Moreover, the constant optimization of open source inference software such as vLLM, SGLang, NVIDIA TensorRT-LLM and NVIDIA Dynamo built on the NVIDIA platform means that on existing NVIDIA infrastructure, token output continues to increase and the cost per token continues to decline long after it’s acquired.

Leading cloud providers and NVIDIA cloud partners are already delivering this advantage at scale. Partners such as CoreWeave, Nebius, Nscale and Together AI have deployed NVIDIA Blackwell infrastructure and optimized their stacks to bring enterprises the lowest token cost available today, with the full benefit of NVIDIA’s hardware, software and ecosystem codesign behind every interaction served.